AI Governance

🧠 ARPIA AI Governance

Interpreting AI Decisions and Results

📌 Purpose

ARPIA’s AI Governance framework ensures that all AI-generated outputs are transparent, traceable, and auditable. It equips security officers, auditors, and compliance teams with the ability to understand how a decision was made, evaluate its reliability, and determine when additional oversight is required.

This capability is essential in regulated and high-risk environments, where organizations must demonstrate not only what an AI system decided, but also how and why it reached that decision.

🌟 Native Transparency Features

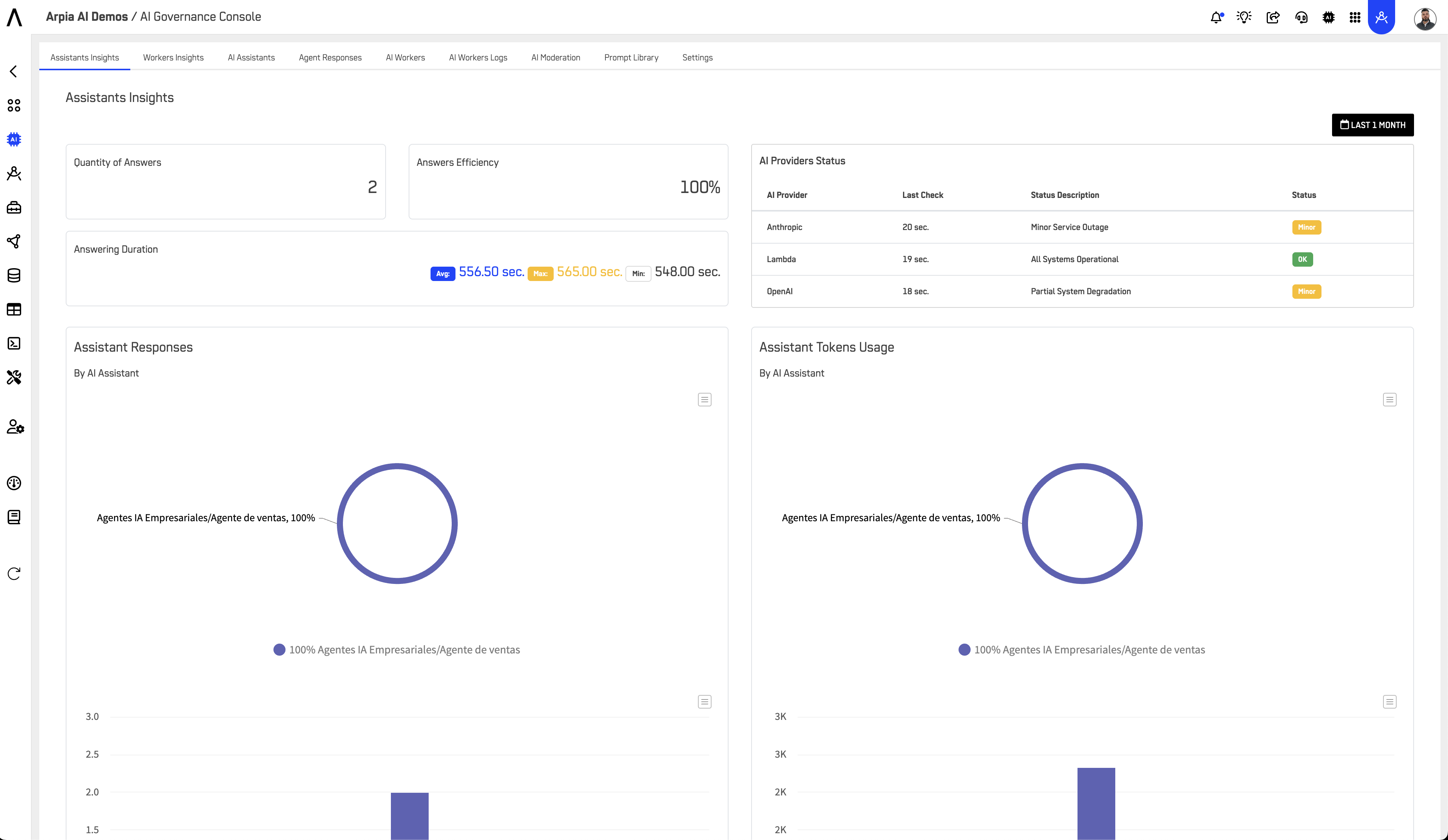

ARPIA embeds governance at the core of its platform. Every AI response is accompanied by metadata that makes interpretation possible:

Confidence Scores (0–100%)

Every response is paired with a confidence score, indicating the system’s degree of certainty. Scores are displayed directly in the Governance Console and stored in logs, forming the basis for risk-aware interpretation.

Full Traceability

All interactions are logged, including the query, the generated response, duration, tokens used, the active model, and the provider. This creates a complete audit trail for reviews and root-cause analysis.
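As an illustration of the audit trail, here is a minimal Python sketch of what one logged interaction could look like. The `InteractionRecord` class, its field names, and the JSON serialization are hypothetical. They mirror the fields listed above (query, response, duration, tokens, model, provider) plus the confidence score, but they are not ARPIA's actual log schema or API.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InteractionRecord:
    """One logged AI interaction. Hypothetical schema, not ARPIA's actual format."""

    timestamp: str      # ISO 8601 time the request was served (UTC assumed)
    query: str          # prompt as submitted by the user
    response: str       # generated answer
    duration_ms: int    # time taken to produce the response
    tokens_used: int    # tokens consumed by the call
    model: str          # active model, e.g. "workarea/xai/grok-2-latest"
    provider: str       # provider that served the request
    confidence: float   # confidence score on the 0-100 scale

    def __post_init__(self) -> None:
        # Reject scores outside the documented 0-100% range.
        if not 0 <= self.confidence <= 100:
            raise ValueError("confidence must be between 0 and 100")

    def to_json(self) -> str:
        # Sorted keys give a stable serialization, which keeps stored records easy to diff.
        return json.dumps(asdict(self), sort_keys=True)
```

Freezing the dataclass reflects the idea that a logged interaction should not change after it is written.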

Active Model Visibility

The framework records which model was used (e.g., workarea/xai/grok-2-latest) and its provider, enabling users to interpret results within the context of each model’s capabilities.

AI Provider Health Status

Real-time indicators of provider performance are tracked, helping explain fluctuations in confidence and ensuring that decision quality is not evaluated in isolation.

Together, these features provide auditors with a native interpretability layer that requires no additional integration or third-party tools.

📊 Confidence Scale and Interpretation

Confidence scores are central to how ARPIA supports decision interpretation.

| Range | Interpretation | Oversight Action |
|---|---|---|
| 0–49% (Low) | High uncertainty or incomplete data | Must be manually reviewed before action |
| 50–74% (Medium) | Moderate reliability with potential gaps | Review recommended; required for compliance-sensitive processes |
| 75–100% (High) | Strong alignment and consistency | Acceptable for most cases, with periodic spot-checks |

For compliance-sensitive or regulated processes, ARPIA enforces manual review whenever the confidence score is below 75%, ensuring that low-certainty results cannot be acted upon without human validation.
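The confidence bands and the 75% enforcement rule can be expressed as a small decision function. This Python sketch is illustrative only: `Band`, `confidence_band`, `requires_manual_review`, and the `regulated` flag are hypothetical names, and the sketch assumes the bands are half-open (for example, 49.5 counts as Low) so that every fractional score falls into exactly one band.

```python
from enum import Enum


class Band(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


REVIEW_THRESHOLD = 75.0  # below this, review is mandatory in compliance-sensitive processes


def confidence_band(score: float) -> Band:
    """Map a 0-100 confidence score to its band (half-open ranges)."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 50:
        return Band.LOW
    if score < REVIEW_THRESHOLD:
        return Band.MEDIUM
    return Band.HIGH


def requires_manual_review(score: float, regulated: bool) -> bool:
    """True when a result must not be acted on without human validation."""
    if confidence_band(score) is Band.LOW:
        return True  # Low band: manual review before any action
    if regulated:
        return score < REVIEW_THRESHOLD  # enforced below 75% for regulated work
    return False  # Medium outside regulated work: review recommended, not enforced
```

For example, a 62% score falls in the Medium band: `requires_manual_review(62, regulated=True)` returns `True`, while the same score outside a regulated process returns `False` and is only recommended for review.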

🔎 Governance in Practice

Governance is more than numbers. By combining interaction logs, model identifiers, provider status, and confidence scores, ARPIA creates a multi-layered explanation framework.

For example, if a financial audit reveals an AI-generated recommendation with a confidence score of 62%, the system not only flags it for review but also provides the surrounding context: the query asked, the model used, the provider’s operational health at the time, and the full interaction history. This enables compliance staff to reconstruct the decision end-to-end and verify whether the uncertainty was due to data gaps, provider issues, or model limitations.

This approach ensures that no AI decision exists as a “black box.” Instead, every response can be explained, verified, and defended.

🛡️ Risk and Compliance Controls

ARPIA integrates governance directly into organizational risk management processes:

Escalation Mechanisms

Responses below the confidence threshold in regulated domains are automatically routed for manual review, ensuring oversight without reliance on user discretion.

Retention and Audit Readiness

All governance data is retained according to configurable policies, guaranteeing availability for audits or investigations. Logs preserve the full decision chain and cannot be altered.
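One common way to make a log tamper-evident is hash chaining: each entry stores a hash computed over the previous entry's hash and its own content, so editing an earlier record invalidates its stored hash. The Python sketch below illustrates that general technique, assuming records are JSON-serializable dictionaries. It does not describe how ARPIA actually stores its logs, and hash chaining detects alteration rather than physically preventing it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class HashChainedLog:
    """Append-only, tamper-evident log (illustrative sketch)."""

    def __init__(self) -> None:
        self._entries: list = []  # list of (record, hash) pairs

    def append(self, record: dict) -> str:
        prev_hash = self._entries[-1][1] if self._entries else GENESIS_HASH
        entry_hash = _entry_hash(prev_hash, record)
        # Store a copy so later changes to the caller's dict do not leak into the log.
        self._entries.append((dict(record), entry_hash))
        return entry_hash

    def verify(self) -> bool:
        # Recompute every hash from the start; an edited or reordered entry,
        # or one deleted from the middle, breaks the chain.
        prev_hash = GENESIS_HASH
        for record, stored_hash in self._entries:
            if _entry_hash(prev_hash, record) != stored_hash:
                return False
            prev_hash = stored_hash
        return True
```

Deleting the newest entries is only detectable if the latest hash is also recorded somewhere independent of the log itself.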

Access Controls

Only authorized personnel can view or export governance data, ensuring sensitive audit records remain secure.
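A deny-by-default, role-based check is one straightforward way to express this rule. In the Python sketch below, the `Action` enum, the role names, and the `ROLE_PERMISSIONS` mapping are hypothetical; in practice they would come from the organization's identity and access management configuration rather than being hard-coded.

```python
from enum import Enum, auto


class Action(Enum):
    VIEW = auto()
    EXPORT = auto()


# Hypothetical role-to-permission mapping for governance data.
ROLE_PERMISSIONS = {
    "auditor": frozenset({Action.VIEW, Action.EXPORT}),
    "security_officer": frozenset({Action.VIEW, Action.EXPORT}),
    "compliance_analyst": frozenset({Action.VIEW}),
}


def is_authorized(user_roles: set, action: Action) -> bool:
    # Deny by default: unknown roles grant nothing, and an empty role set is never authorized.
    return any(action in ROLE_PERMISSIONS.get(role, frozenset()) for role in user_roles)
```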

Monitoring and Alerts

Persistent low-confidence patterns or provider degradation trigger automated alerts, enabling proactive resolution before risks materialize.
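The alerting rule can be sketched as a rolling window over recent confidence scores combined with a provider-health flag. In this Python sketch, the window size, the 50% cut-off (taken from the Low band), the tolerated fraction of low scores, and the alert names are all assumptions chosen for illustration, not ARPIA defaults.

```python
from collections import deque


class LowConfidenceMonitor:
    """Flags persistent low confidence and provider degradation (illustrative thresholds)."""

    def __init__(self, window: int = 20, low_threshold: float = 50.0, max_low_fraction: float = 0.3) -> None:
        self.scores = deque(maxlen=window)  # keeps only the most recent `window` scores
        self.low_threshold = low_threshold
        self.max_low_fraction = max_low_fraction

    def observe(self, score: float, provider_healthy: bool = True) -> list:
        """Record one score and return any alerts it triggers."""
        self.scores.append(score)
        alerts = []
        if not provider_healthy:
            alerts.append("provider_degraded")
        # Wait for a full window so one early low score does not count as a "pattern".
        if len(self.scores) == self.scores.maxlen:
            low = sum(1 for s in self.scores if s < self.low_threshold)
            if low / len(self.scores) > self.max_low_fraction:
                alerts.append("persistent_low_confidence")
        return alerts
```

Requiring a full window before alerting trades a little detection latency for fewer false alarms from isolated low scores.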

By embedding these safeguards, ARPIA ensures that AI outputs are not only traceable but also actively managed as part of enterprise compliance programs.

📌 Our Approach to AI Standards and Frameworks

We have completed a SOC 2 Type I examination, which attests that our controls for security, availability, and confidentiality were suitably designed and implemented as of a specific point in time. This attestation provides a strong foundation of trust and assurance for our customers and partners.

Beyond this, we align our AI governance practices with leading global frameworks. We adopt the EU AI Act’s principles of transparency, traceability, and human oversight. We follow the ISO/IEC 42001 AI Management System Standard to strengthen accountability, auditability, and risk controls. We apply the NIST AI Risk Management Framework to promote transparency, reliability, and explainability. Together, these measures reflect our commitment to building responsible, trustworthy, and well-governed AI systems.

✅ Outcome

By combining confidence scoring, traceability, provider monitoring, and structured oversight, ARPIA transforms AI from a black-box tool into a transparent, auditable system of record. Security officers and auditors can verify not just what the AI decided, but how and why it reached that outcome — with the assurance that every low-confidence or high-risk result is subject to mandatory human review.

In practice, this ensures that AI-driven processes remain interpretable, accountable, and defensible — fully aligned with modern compliance expectations.
